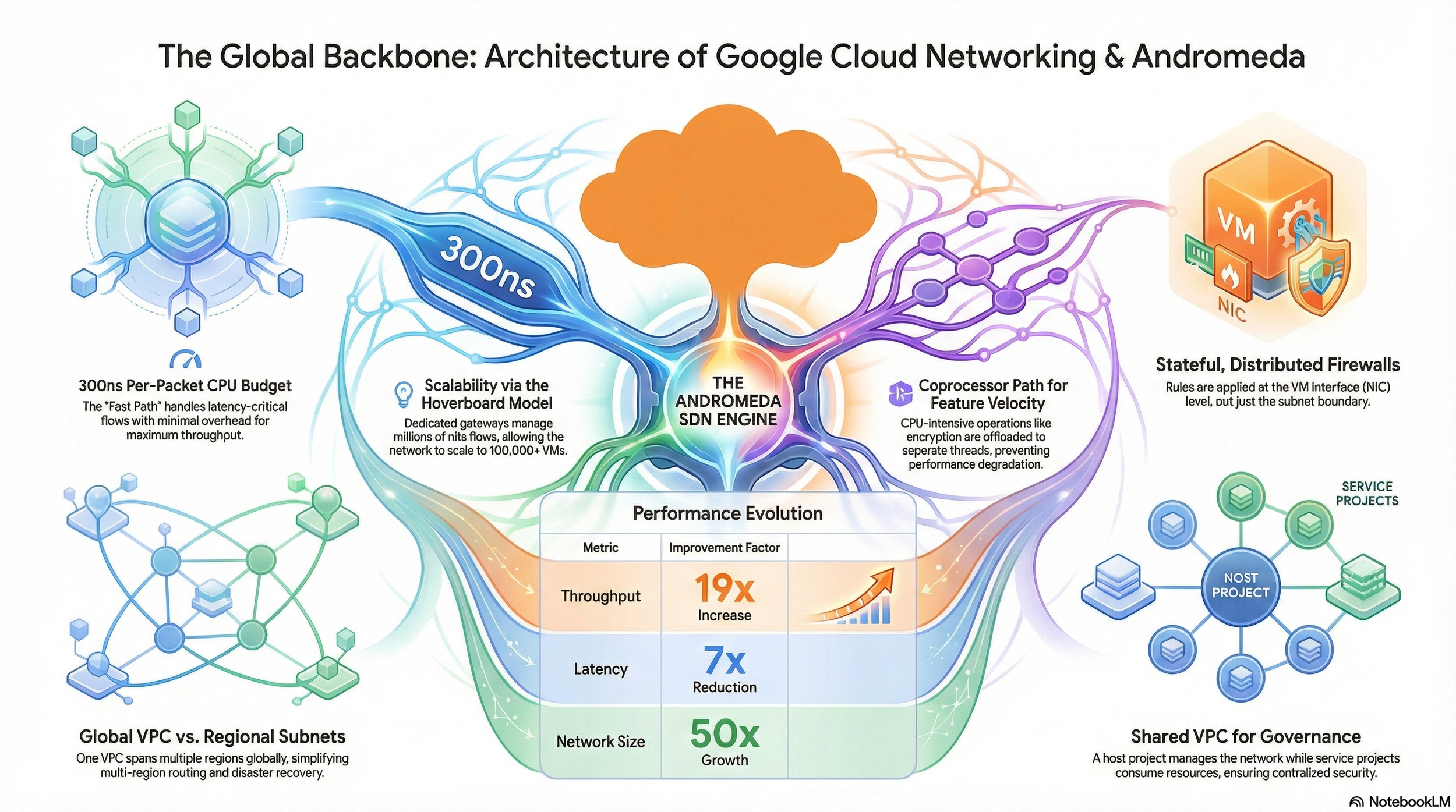

Inside Andromeda: How Google Cloud Achieved a 19x Throughput Boost in Network Virtualization:

Virtual Machine (VM) in the cloud

When you launch a Virtual Machine (VM) in the cloud, the experience is deceptively simple: a few clicks, a short wait, and a terminal prompt appears. Yet beneath that interface lies a staggering engineering feat—a global, elastic fabric that functions as a single digital building. This invisible orchestration is the result of Andromeda, a software-defined networking (SDN) system that redefined the limits of cloud scale. Within this environment, Google Cloud Virtual Private Clouds (VPCs) manage over 100,000 VMs with near-zero latency, treating the entire planet’s worth of data centers as one cohesive unit.

How does an SDN achieve this without crumbling under its own weight? The answer lies in a design philosophy that prioritizes software velocity over rigid silicon. Here are five surprising realities of how the ghost in the machine operates.

1. The 300-Nanosecond Challenge: Performance That Mimics Hardware

Virtualization traditionally introduces a "tax" of overhead, but our goal is to move packets at speeds largely indistinguishable from physical hardware. To achieve this, Andromeda utilizes a sophisticated "Fast Path" design within its host dataplane that employs OS bypass and high-speed polling.

The Fast Path runs on a dedicated logical CPU to avoid the latent death spiral of context switches. Engineers operate under a brutal constraint: a per-packet CPU budget of just 300 nanoseconds. To maintain this hardware-level speed, the system distinguishes between performance-critical tasks and complex, CPU-intensive ones. Performance-critical flows remain on the Fast Path, while "Coprocessor Paths" handle tasks like deep packet inspection or encryption. These coprocessors run in per-VM floating threads, a crucial design choice that ensures fairness and isolation; one tenant’s heavy encryption workload cannot steal cycles from another's Fast Path.

"Our target is to support the same throughput and latency available from the underlying hardware... achieve performance that is competitive with hardware while maintaining the flexibility and velocity of a software-based architecture."

2. The "Hoverboard" Model: Why Your Idle Data Doesn't Slow Me Down

Scaling a control plane to program connectivity for millions of potential VM pairs is a mathematical nightmare. Traditional "preprogrammed" models scale quadratically (N^2); as the number of VMs grows, the overhead explodes until the system chokes on its own routing tables.

Google’s solution is the Hoverboard programming model, which shifts the scaling curve from quadratic to linear. It operates on a technical version of the 80/20 rule: the system only programs high-bandwidth, active communication patterns directly onto the VM hosts. For the "long tail" of low-bandwidth or mostly idle flows, the network uses dedicated gateways known as Hoverboards.

A key architectural insight here is robustness: the system does not rely on Hoverboards to report their own load (as an overloaded gateway is a poor reporter). Instead, it relies on usage reports from the sending VM hosts. When a threshold is crossed, the control plane dynamically "offloads" the flow, programming a direct host-to-host route that bypasses the gateway entirely.

3. Global VPCs vs. Regional Subnets: A Mathematical Paradox

In legacy cloud environments, a VPC is a regional silo. Connecting resources in Virginia to those in Tokyo requires the "Transit Gateway tax"—added architectural complexity, increased latency, and extra costs. Google Cloud flips this logic with a global-by-design architecture: the VPC is Global, while subnets are Regional.

This creates a "flat" global routing environment where a VM in London and a VM in Singapore communicate privately as if they were in the same room. By leveraging Google’s global fiber backbone, we eliminate the need for inter-region peering or transit hubs. The benefits for the Senior Architect are clear:

Simplified Multi-Region Routing: No "Transit Gateways" are required, radically reducing the surface area for routing errors.

Centralized Governance: Security policies and firewall rules are written once and applied globally, ensuring consistent compliance across the entire planet simultaneously.

4. Micro-Security: Firewalls at the NIC, Not the Subnet

Traditional "perimeter security" is a relic. Google Cloud discards the subnet-edge firewall model for a far more granular approach: firewalls are enforced at the individual VM network interface (NIC) level. This is the foundation of true micro-segmentation.

These rules are stateful and operate on a strict hierarchy. The system includes "Implied Rules" (allow all egress, deny all ingress) with a fixed lowest priority of 65535. Custom rules occupy the 0–65534 range, allowing architects to precisely override defaults.

Perhaps the most visionary feature is identity-based security. Instead of fragile rules based on volatile IP ranges, we use Service Accounts. Security policies follow the workload's identity; if a compromised VM is moved or its IP changes, its cryptographic identity remains the anchor for enforcement. In a zero-trust world, this is the only model that scales.

5. The "Hairpin" Trick: How VMs Move Without Dropping a Packet

Transparent VM Live Migration is the "Holy Grail" of cloud maintenance—the ability to rebuild the plane while it is in flight. Google routinely performs weekly non-disruptive upgrades of the entire Andromeda dataplane, swapping out the underlying "hardware" without the user ever detecting a blip.

When a VM migrates to a new host, it enters a "blackout phase" with a median duration of 65ms. To satisfy even the most demanding workloads, the 99th percentile is kept to just 388ms. To prevent packet loss during this handoff, Andromeda uses hairpin flows. The original host temporarily forwards any incoming packets to the new destination. Once the global routing tables settle, the hairpin is removed, and traffic flows directly to the new physical host. This allows us to iterate on the dataplane with the velocity of a startup while maintaining the uptime of a utility.

Conclusion: The Future of the Software-Defined World

The velocity of software-based architectures is now outstripping traditional hardware at an exponential rate. Over a five-year period, the Andromeda stack achieved a 19x improvement in throughput and a 16x gain in CPU efficiency. These leaps were not the result of waiting for a new generation of silicon, but of iterating on more intelligent code.

As we look toward the next decade, the line between hardware and software continues to blur. If software can deliver hardware-level performance with global scale and zero-downtime upgrades, it raises a fundamental question for every IT decision-maker: Is the "hardware" of the future actually just highly optimized code?